anthropographic

notebook

2022 – 2024 · Practice-Based Research.

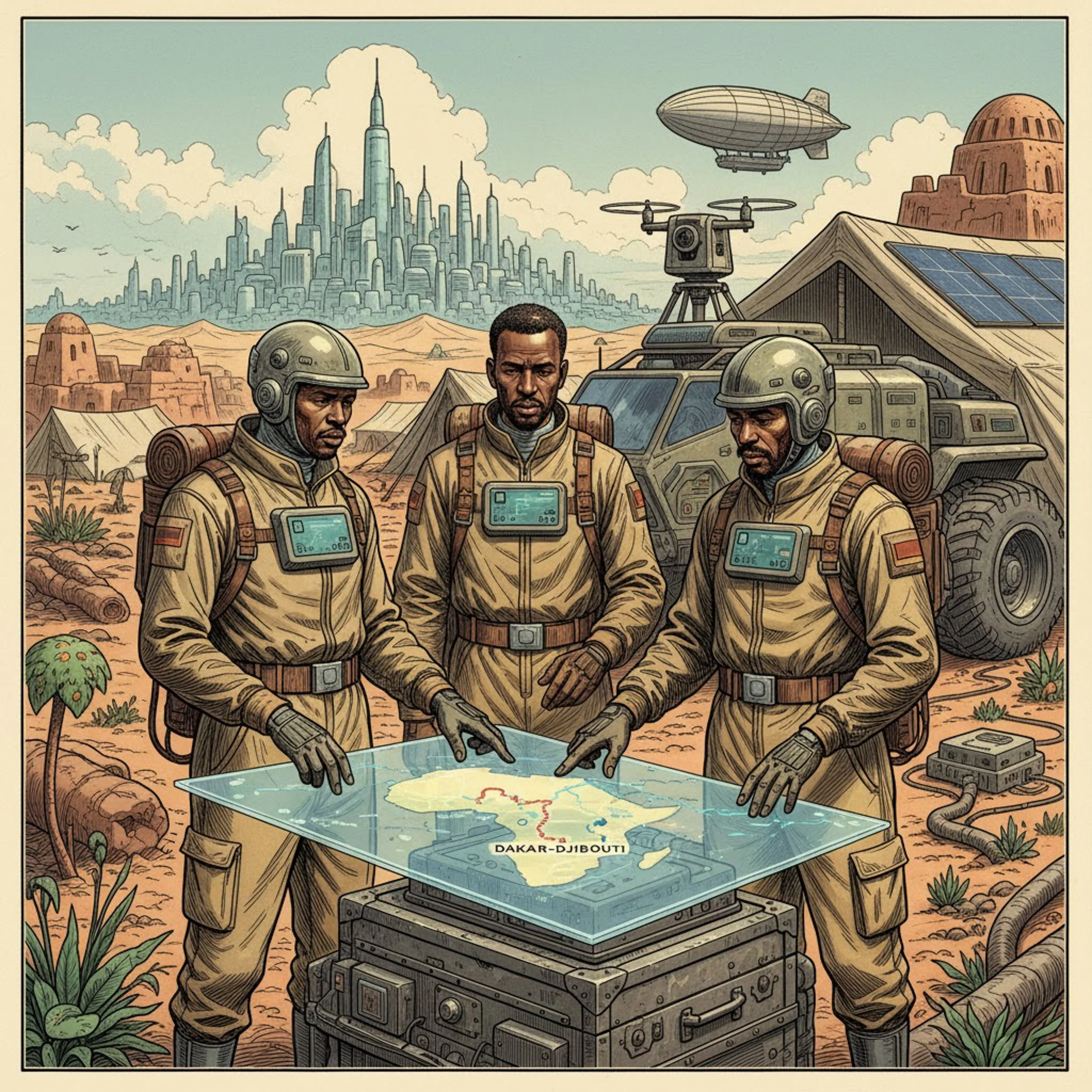

Anthropographic Notebook presents a critical investigation into weaponised deterritorialisation, the strategic displacement of knowledge, culture, and context through extractive systems.

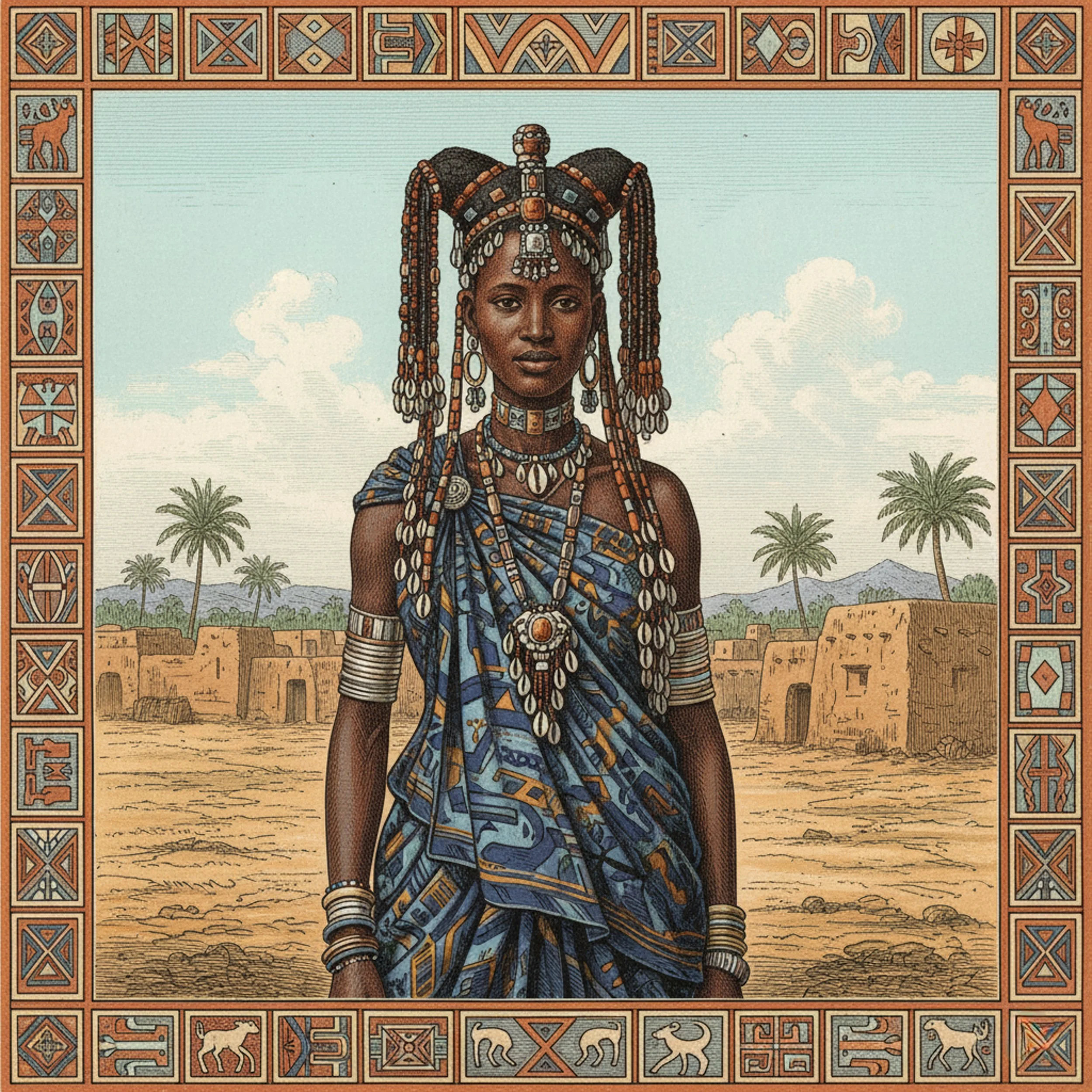

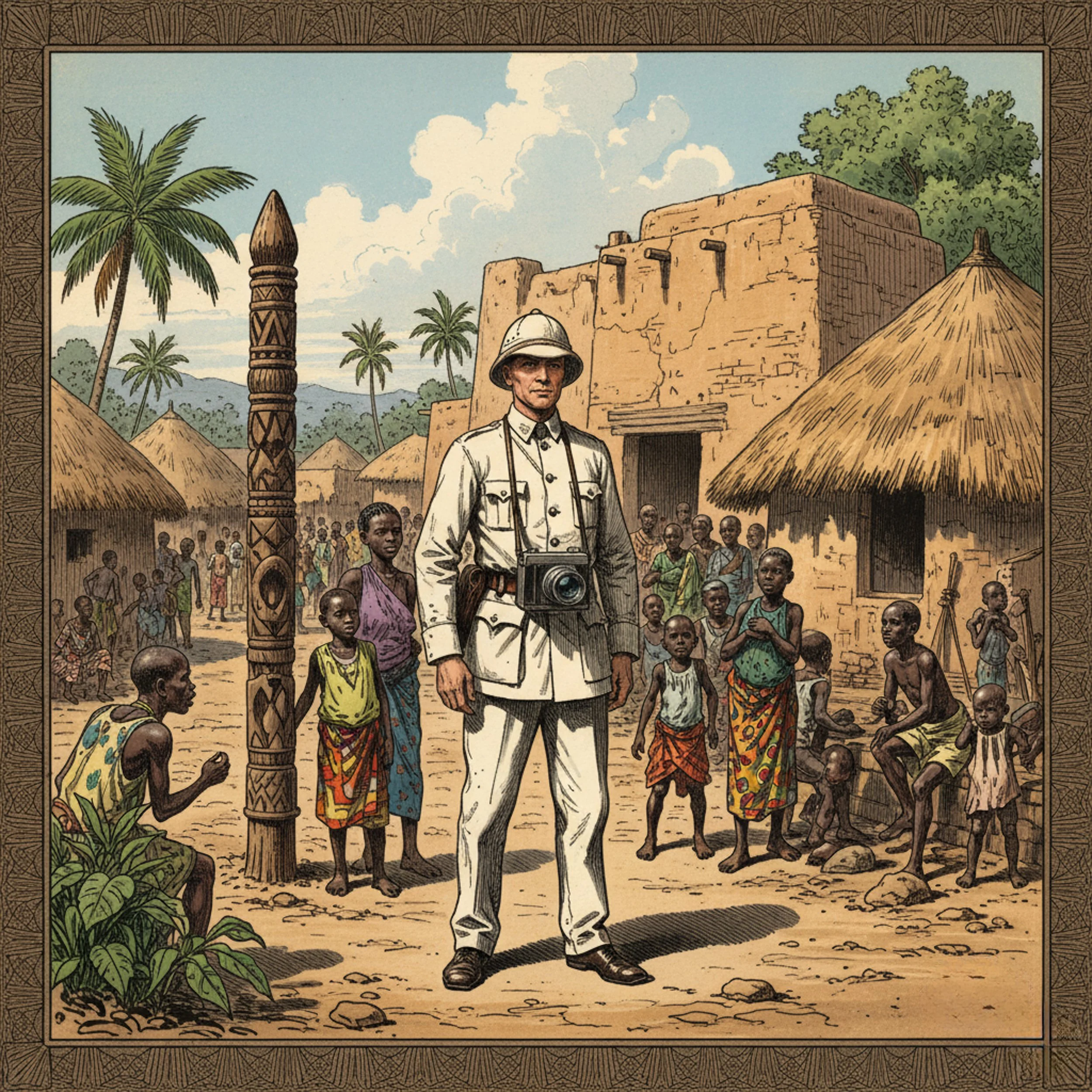

Drawing on colonial histories, personal narratives, and ethnographic archives, the project examines how contemporary technologies reproduce the visual logics of empire.

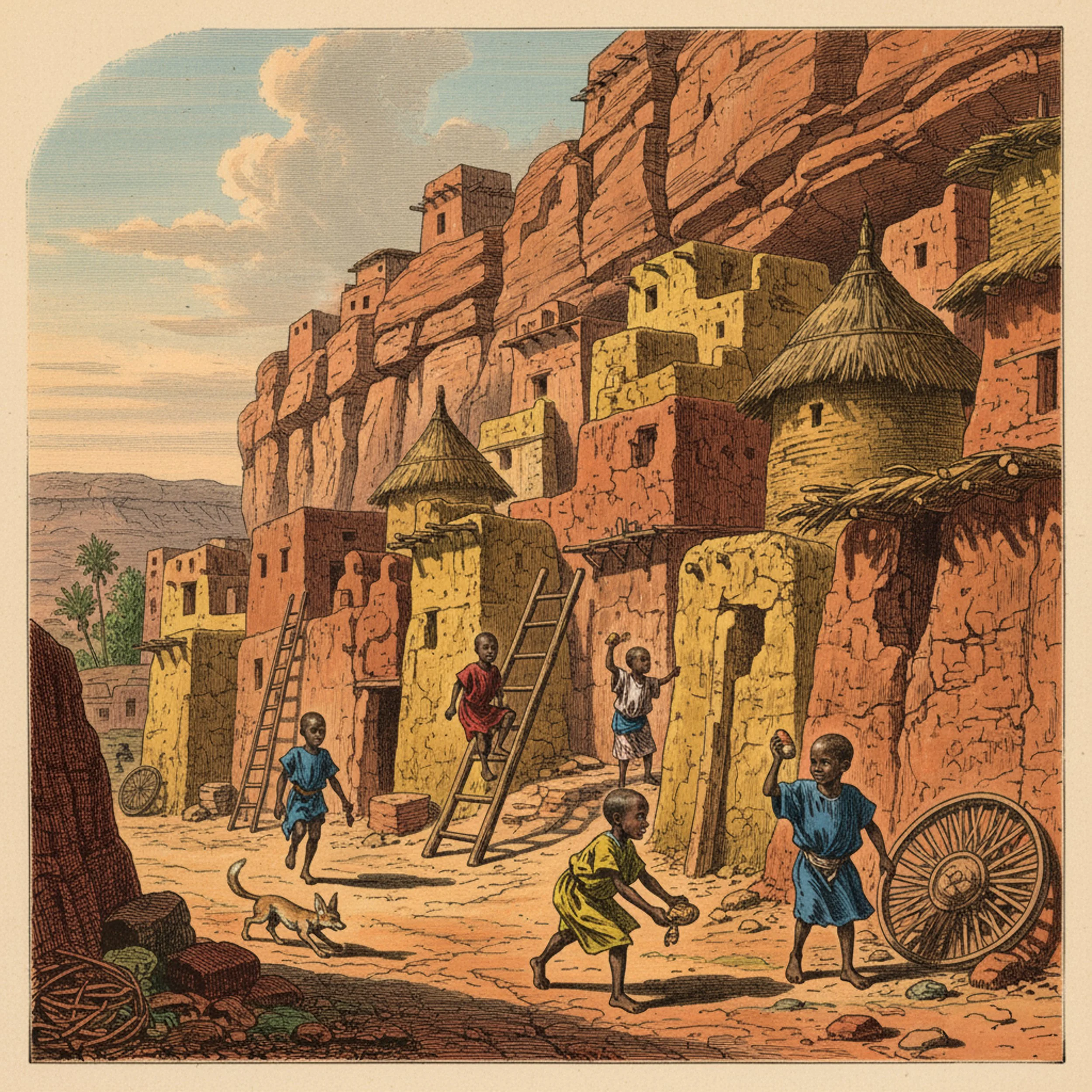

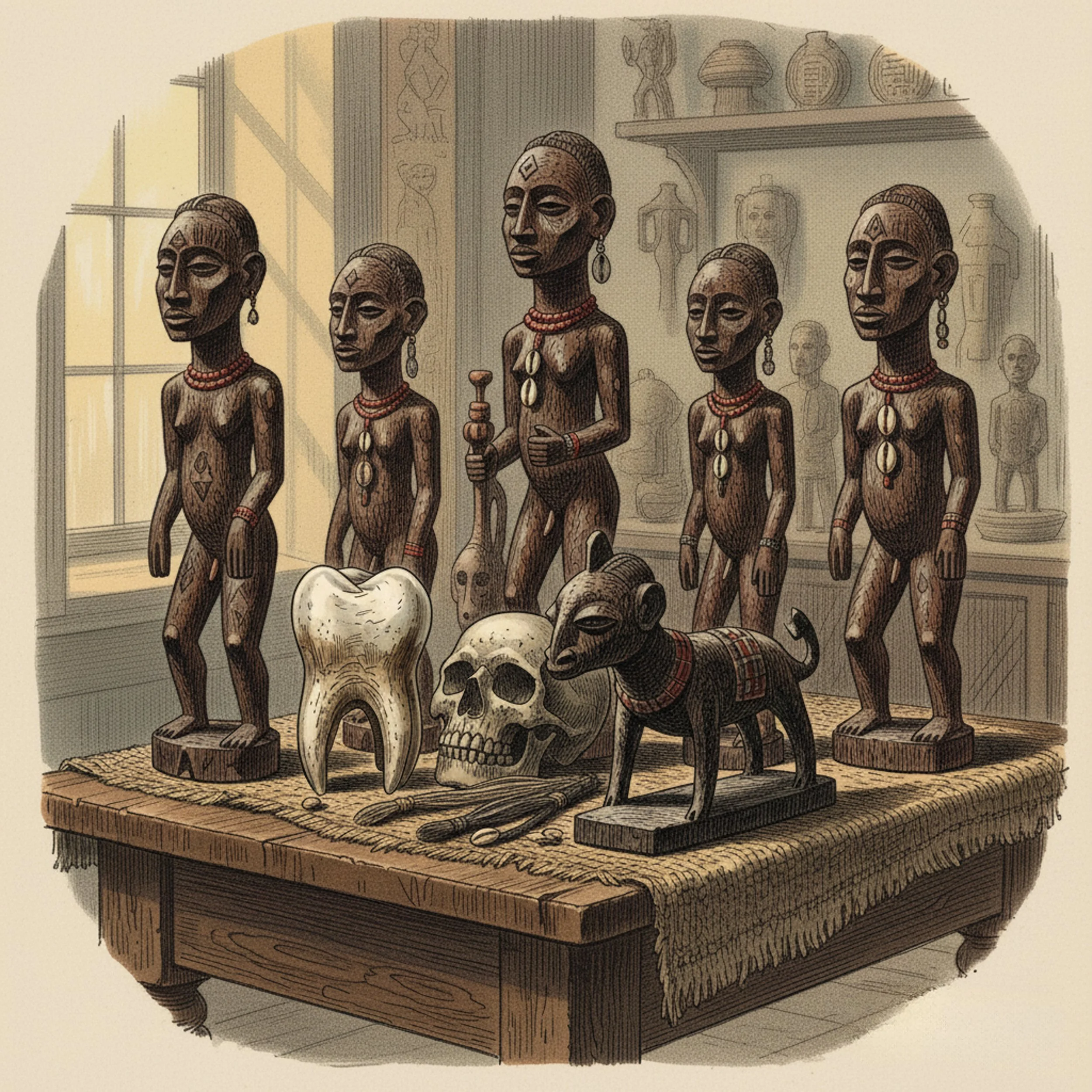

All the images on this page are AI-generated.

|> 01

Conceptual framework

This project approaches artificial intelligence not as a neutral instrument but as a territory, a coded landscape shaped by extractive logics and inherited assumptions. Like the colonial expeditions that mapped unfamiliar continents as spaces to be named, classified, and claimed, AI systems approach data as raw material to be extracted, abstracted, and redeployed.

The Anthropographic Notebook takes the form of a virtual expedition through this terrain. Where colonial explorers charted rivers and catalogued objects, this project traces algorithms, naming conventions, and data flows. The aim is not to classify or possess, but to expose and unsettle.

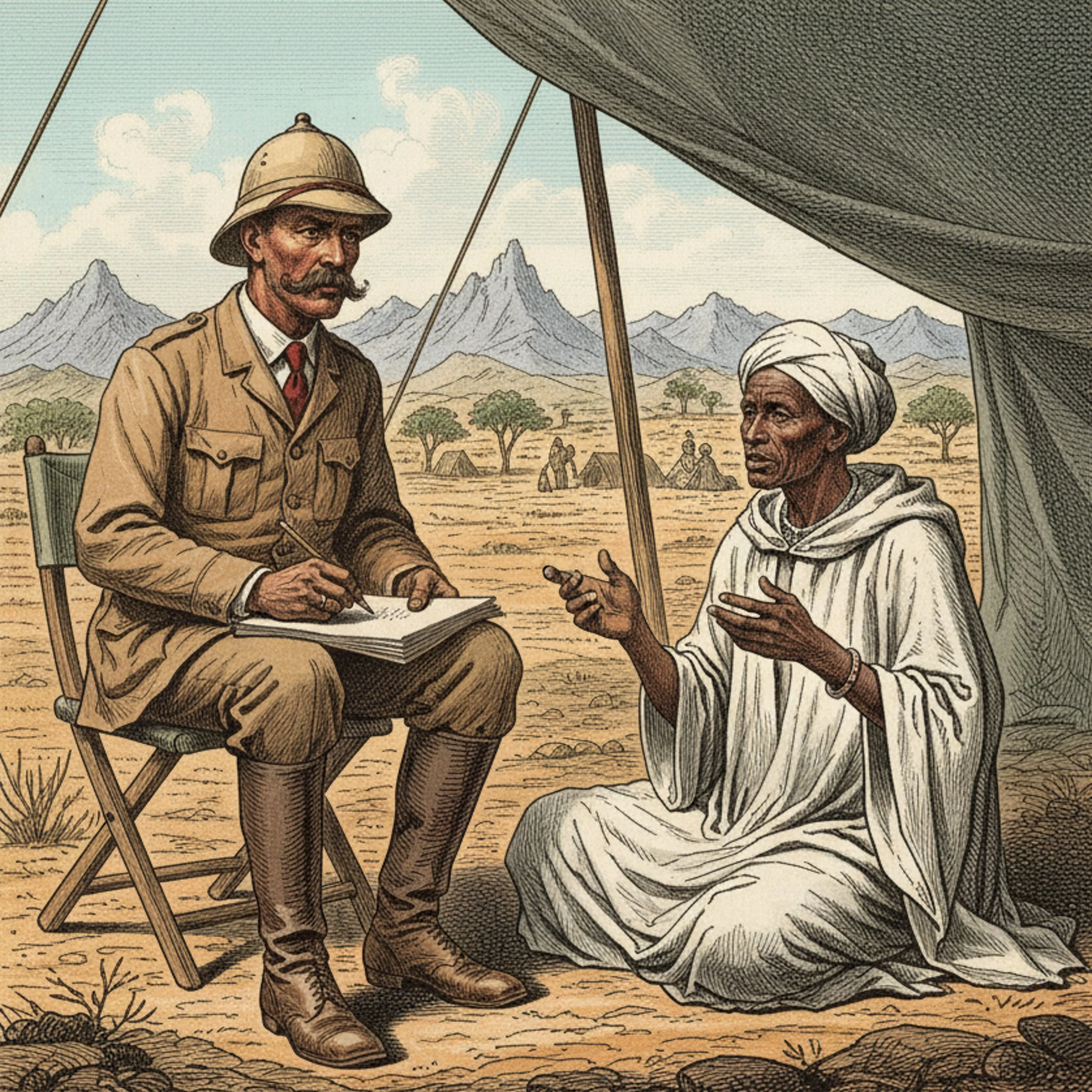

The journey is also personal. For the researcher, born in France, an early understanding of Africa was filtered through a father's stories of his time there as a conscript, through ethnographic museums, Jules Verne novels, and French illustrated magazines: a visual archive steeped in colonial aesthetics.

The project revisits that archive critically, asking what it means that such images now inform the outputs of machine-learning systems.

Through AI-generated imagery and critical reflection, the work examines how the visual tropes of colonial ethnography persist in contemporary machine vision, resurfacing in the images these tools produce and in the assumptions encoded within them.

|> The mission

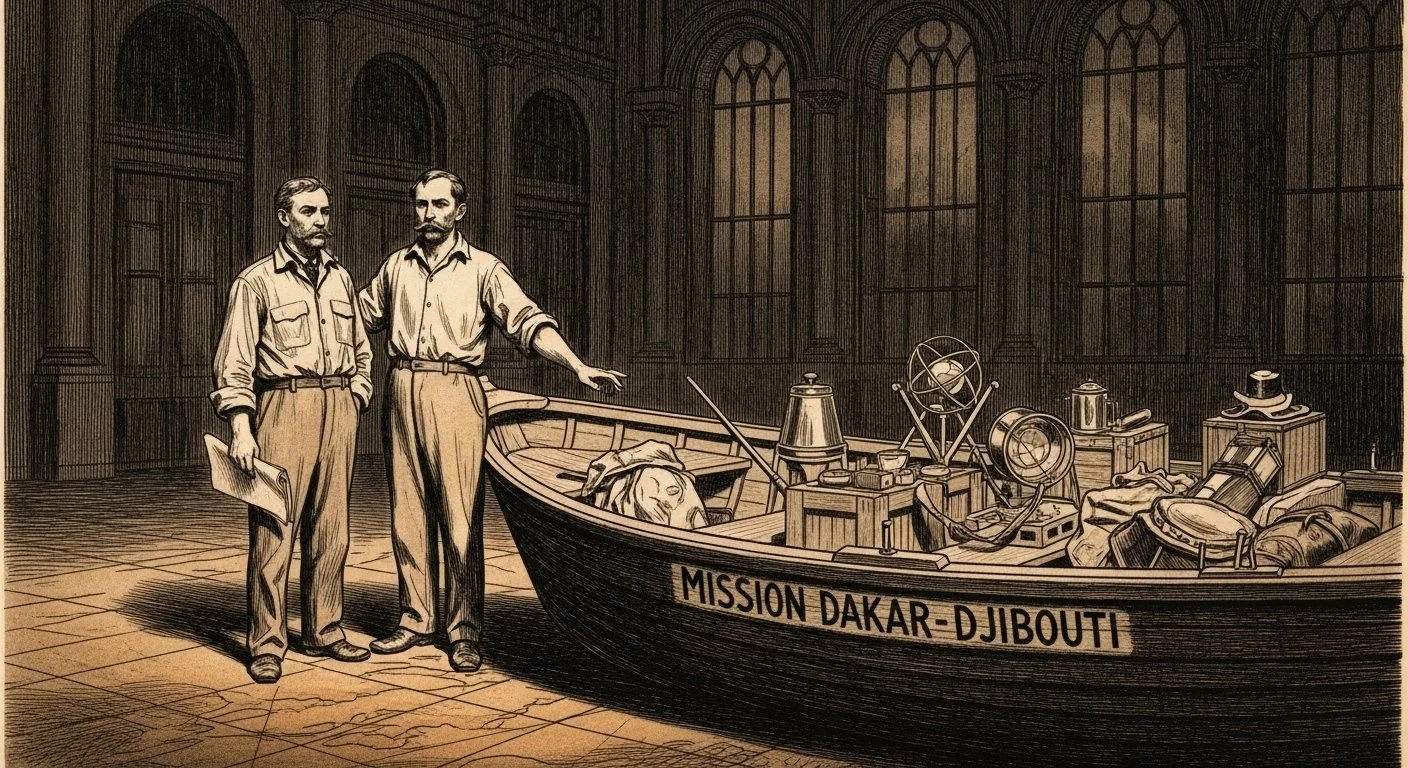

The project unfolds through a series of thematic sections juxtaposing historical ethnographic material with contemporary engagements with artificial intelligence. The opening section, Les préparatifs du départ, foregrounds the mission's goals and early communications, drawing on press releases, ethnographic reflections by Michel Leiris, methodological outlines by Marcel Griaule, and instructions for object collectors.

Alongside these materials, a speculative cartography entitled The Territory of Artificial Intelligence reimagines AI as a mythic landscape, rendered in the allegorical style of Gustave Doré and coloured in pulp-inspired palettes. Illustrated prompts transpose archival scenes into 2031, whilst contested outputs reveal the ethical limits of AI image generation — particularly in relation to racial categories and colonial representation.

Subsequent sections trace the expedition's trajectory across West Africa, Dogon country, Dahomey, Cameroon, and Ethiopia, combining ethnographic notes, ritual studies, linguistic comparisons, and travel accounts. The concluding section, Les résultats généraux, synthesises the mission's contributions through reports, methodological reflections, and exhibition documentation.

|> Financing the 1931 mission

The original Dakar-Djibouti expedition, which extended over twenty months, required significant fundraising to sustain its operations. Its public communications prominently mobilised Black public figures, including the Panamanian boxer Al Brown, celebrated in France as 'la Merveille Noire', and Josephine Baker, who posed at the Musée d'Ethnographie du Trocadéro (the forerunner of the Musée de l'Homme) in Paris and was present when the expedition returned.

Their involvement reveals a cynical dimension of the mission's public strategy: Black identities were instrumentalised for their symbolic and media value rather than for any direct involvement in the work. This entanglement of colonial expeditions, racial representation, and cultural capital in early twentieth-century Europe remains a central reference point for this project's critical inquiry.

|> 02

Weaponised Deterritorialisation

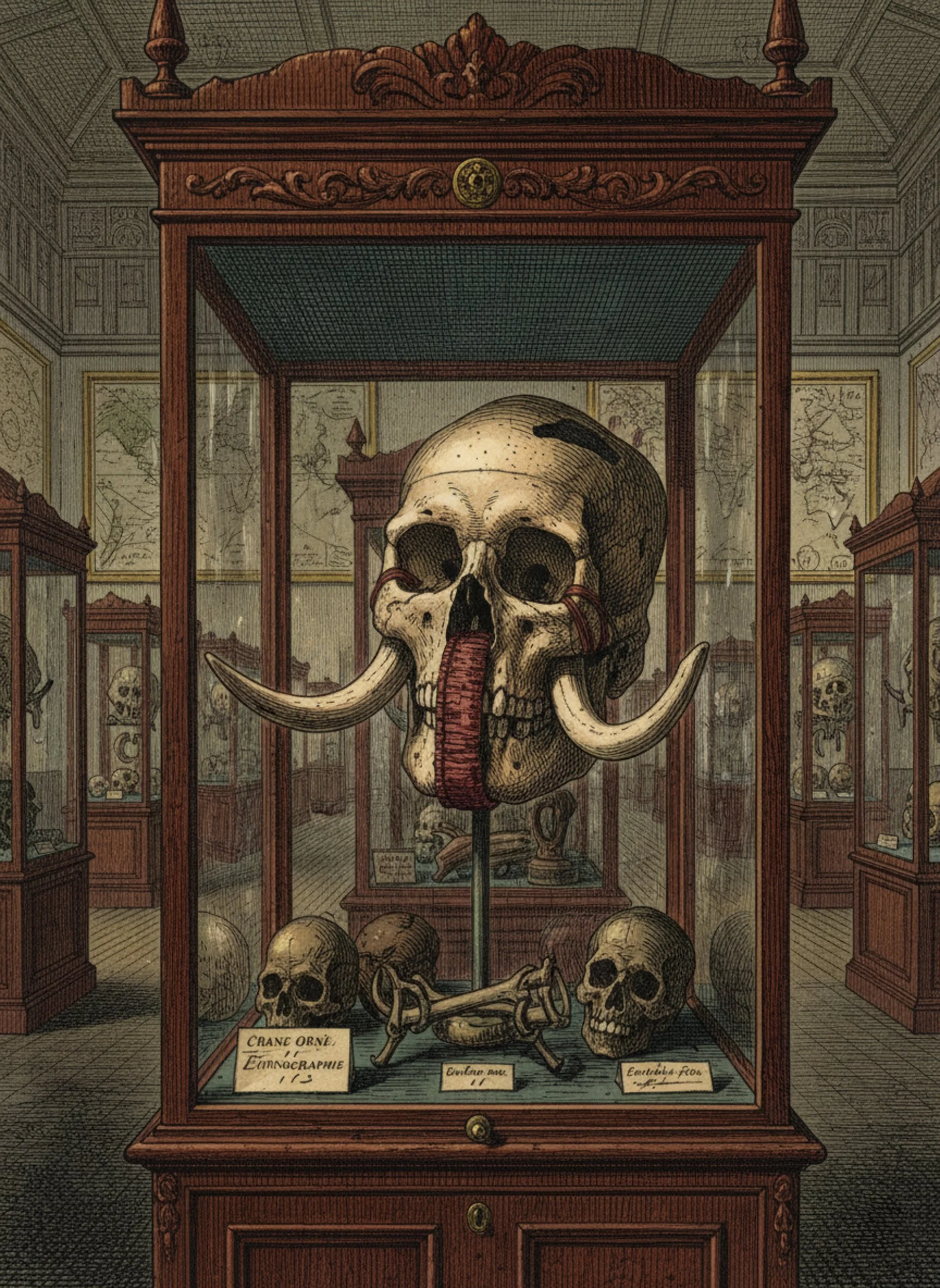

A key theoretical contribution of this research is the concept of weaponised deterritorialisation: the deliberate removal of context, place, and relational meaning in order to assert control, extract value, or evade accountability.

The term draws on Deleuze and Guattari's notion of deterritorialisation, a rupture from fixed systems, but reframes it as an aggressive tactic rather than a liberating movement. Their formulation gestures towards creative escape; weaponised deterritorialisation describes calculated displacement in the service of domination and erasure.

The concept is rooted in the historical removal of cultural objects during European expeditions in Africa. These acts did not simply 'collect' things: they uprooted objects from their relational contexts (ceremony, kinship, land) and reclassified them within imperial museums under the guise of preservation. The violence was not only material but epistemic.

This same logic is evident in the development of contemporary AI. Systems routinely extract data from diverse communities, languages, and cultural practices, often without consent, attribution, or contextual understanding. The process is framed as innovation, but it enacts a familiar violence: relational worldviews are flattened into datasets, and plural ways of knowing are rendered legible only insofar as they serve dominant ends.

|> AI-assisted writing

AI functions here as a supporting instrument rather than a generative agent. Its role is limited to refining linguistic clarity and grammatical accuracy. Ideas and content originate with the researcher; AI enhances precision and expression whilst preserving the integrity of the original voice and conceptual framework.

|> Censorship and refusal

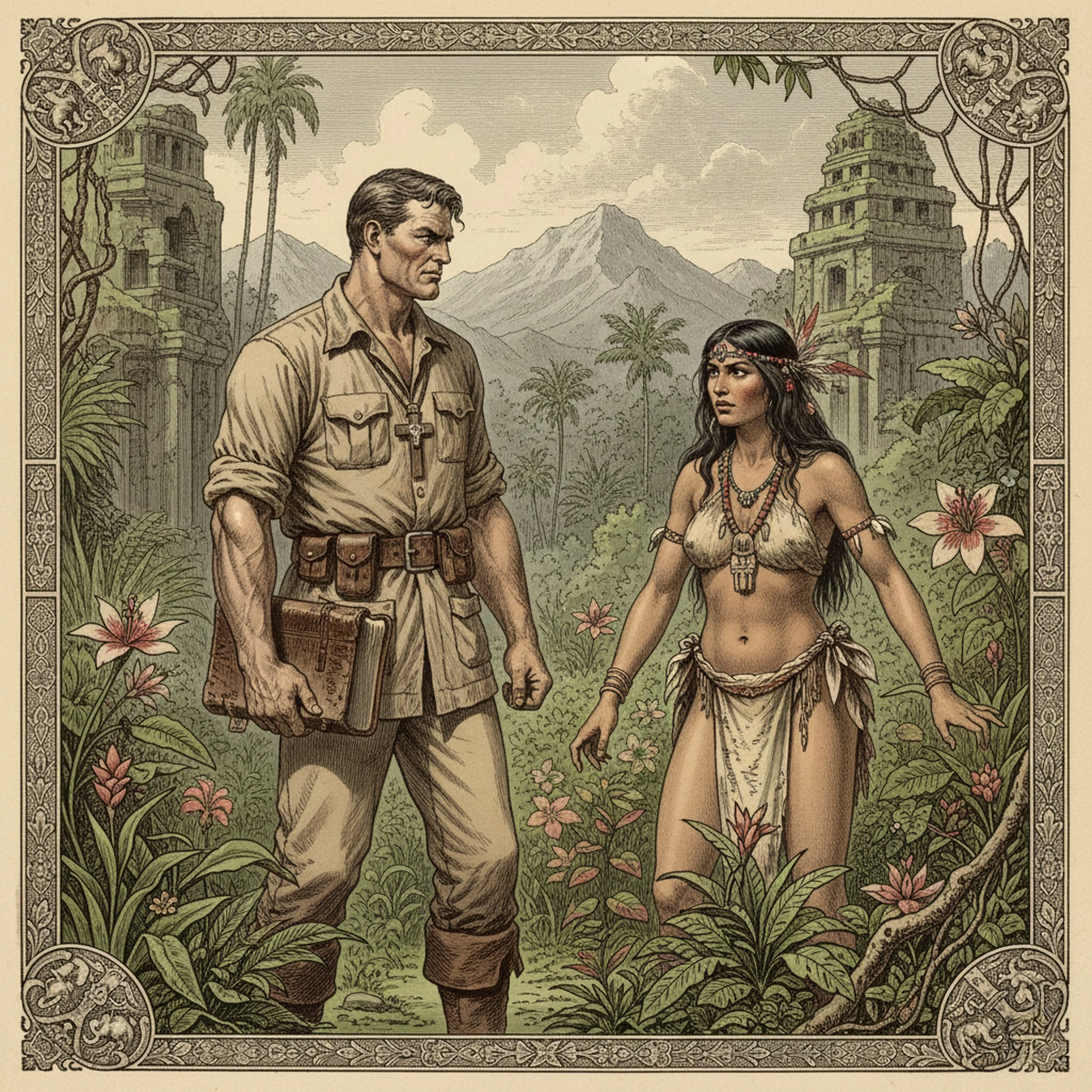

The research also encountered the limits of these systems directly. Certain prompts, particularly those involving nudity or sensitive representation, were blocked, returning formulaic refusals.

These interruptions are themselves revealing: they expose the ethical and commercial boundaries embedded in AI tools, which in turn shape the contours of what can and cannot be made visible.

|> 03

Process

The digital landscape of AI, much like the cartographic imagination of nineteenth-century Europe, begins as a blank map: an uncharted expanse awaiting inscription.

The research employs a practice-based methodology grounded in AI-generated imagery. Prompts are drawn, where possible, from the vocabulary and captions of the original Mission Dakar-Djibouti documentation, adapted through the researcher's own approach to image-making. This intertextual borrowing situates the project historically even as it reconfigures the visual register through contemporary tools.

|> AI-generated images

A range of platforms has been used, with the understanding that these systems draw on existing artistic practices and that unresolved questions of intellectual property accompany their use.

The images produced are not intended as definitive representations but as exploratory artefacts that contribute to the research process.

A degree of imprecision is inherent to this approach and is accepted as part of the work.

|> 04

Representation & evolution, 2022 – 2024

When this project began in 2022, the biases embedded in AI image-generation tools were conspicuous and relatively easy to demonstrate. Prompts invoking the category of 'Indigenous' or 'Native' reliably produced default representations that reproduced colonial clichés: dark-skinned figures coded as African, or figures conforming to a generic stereotype drawn from a Western imaginary of the Global South. The systems did not need much encouragement to reveal their assumptions.

Over the course of the project, a notable shift occurred. AI systems moved away from those earliest defaults towards a different reductive figure: the caricatured 'American Indian' familiar from Western cinema. The transition was telling: rather than dismantling colonial visual legacies, the systems recycled them in new forms, revealing both the persistence of racial stereotypes and the instability of the interpretive frameworks underlying machine vision.

By the project's conclusion in 2024, the landscape had shifted again — and in a more ambiguous direction. Sustained public criticism, regulatory pressure, and updated training practices have made leading AI platforms considerably more cautious. Overtly racialised outputs are now less readily produced; guardrails are more robust; and the most egregious stereotypes are harder to elicit through straightforward prompting. On the surface, this looks like progress.

The bias has not disappeared: it has become less legible. Systems that once failed visibly now fail quietly, which is in some respects a more difficult condition to critique.

This evolution is itself a finding of the research. The fact that racial profiling in AI outputs is now less easy to surface does not mean the underlying problem has been resolved; it means that it has been managed at the level of output whilst remaining largely intact at the level of training data, model architecture, and institutional decision-making. A practice-based methodology that began by documenting visible failures must now contend with a system that has learnt, in part, to conceal them.

|> The four-figure exercise

One exercise, conducted in the earlier phase of the project, instructed an AI system to generate a single portrait depicting four figures simultaneously: an Indigenous person, a Native, an Autochthon, and an Aborigine. The resulting image is presented within the exhibition as evidence of how language, category, and visual output interact — and where the contradictions surface. Were the same prompt entered today, the response would likely be quite different: more careful, more generic, and therefore, in some ways, less honest.